From the earliest hardware prototypes to the latest tracking software, the Leap Motion platform has come a long way. We’ve gotten lots of questions about how our technology works, so today we’re taking a look at how raw sensor data is translated into useful information that developers can use in their applications.

Hardware

From a hardware perspective, the Leap Motion Controller is actually quite simple. The heart of the device consists of two cameras arranged as a stereo pair and three infrared LEDs. The LEDs illuminate the space above the device, and the cameras capture infrared light with a wavelength of 850 nanometers, which is outside the visible light spectrum.

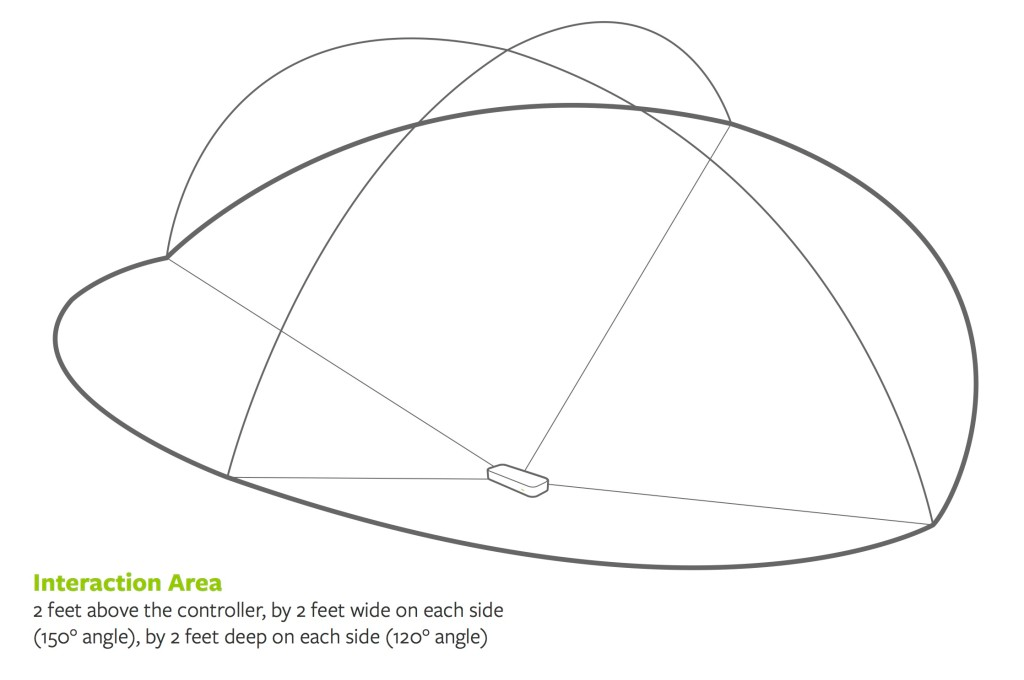

Thanks to its wide-angle lenses, the device has a large interaction space of eight cubic feet, which takes the shape of an inverted pyramid – the intersection of the binocular cameras’ fields of view. The Leap Motion Controller’s viewing range is limited to roughly 2 feet (60 cm) above the device. This limit comes from how LED light propagates through space: the farther a hand is from the device, the less of the LEDs’ light it reflects back to the cameras, so beyond a certain distance it becomes much harder to infer the hand’s position in 3D. LED light intensity, in turn, is ultimately limited by the maximum current that can be drawn over the USB connection.
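To make the inverted-pyramid shape concrete, here is a minimal sketch of a containment test for a point above the device. The 60 cm ceiling comes from the text above; the two field-of-view angles, the coordinate convention (millimeters, origin at the device center, y pointing up), and the function name `in_interaction_volume` are hypothetical placeholders, not Leap Motion specifications.

```python
import math

# Hypothetical values for illustration only; they are NOT device specifications.
FOV_X_DEG = 90.0       # assumed full field-of-view angle, left/right
FOV_Z_DEG = 90.0       # assumed full field-of-view angle, front/back
MAX_HEIGHT_MM = 600.0  # roughly 2 feet (60 cm) above the device, per the text


def in_interaction_volume(x_mm: float, y_mm: float, z_mm: float) -> bool:
    """Return True if (x, y, z), in mm relative to the device center with y
    pointing up, lies inside an inverted pyramid whose apex is at the device."""
    if not 0.0 < y_mm <= MAX_HEIGHT_MM:
        return False  # below the device or beyond the usable range
    # The pyramid widens with height: half-width = height * tan(half-angle).
    half_width_x = y_mm * math.tan(math.radians(FOV_X_DEG / 2))
    half_width_z = y_mm * math.tan(math.radians(FOV_Z_DEG / 2))
    return abs(x_mm) <= half_width_x and abs(z_mm) <= half_width_z
```

A point directly above the device at 20 cm is inside the volume, while the same point at 70 cm is not, and a point 30 cm to the side is only inside once the hand is high enough for the pyramid to have widened that far.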

At this point, the device’s USB controller reads the sensor data into its own local memory and performs any necessary resolution adjustments. This data is then streamed via USB to the Leap Motion tracking software.
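The article does not say how the resolution adjustment is done. As a purely hypothetical illustration, one common way to reduce an image's resolution is 2x2 binning, which averages each block of four neighboring pixels into one. The sketch assumes 8-bit grayscale images stored as nested lists with even dimensions; the function name `bin_2x2` is made up for this example.

```python
from typing import List

Image = List[List[int]]  # rows of 8-bit grayscale pixel values


def bin_2x2(image: Image) -> Image:
    """Halve width and height by replacing each 2x2 block with its integer mean.

    Assumes the image has an even number of rows and columns.
    """
    height, width = len(image), len(image[0])
    if height % 2 or width % 2:
        raise ValueError("width and height must both be even")
    out: Image = []
    for r in range(0, height, 2):
        row = []
        for c in range(0, width, 2):
            total = (image[r][c] + image[r][c + 1]
                     + image[r + 1][c] + image[r + 1][c + 1])
            row.append(total // 4)  # integer average keeps values in 0..255
        out.append(row)
    return out
```

Binning trades spatial detail for a quarter of the data per frame, which is the kind of trade-off a bandwidth-limited USB link might call for.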

Because the Leap Motion Controller tracks in near-infrared, the images appear in grayscale. Intense sources or reflectors of infrared light can make hands and fingers hard to distinguish and track. This is something that we’ve significantly improved with our v2 tracking beta, and it’s an ongoing process.
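One way to see why intense infrared sources cause trouble: pixels that saturate at the top of the 8-bit range look identical whether the light comes from a hand or from a lamp, so the hand's outline is lost in those regions. The sketch below, assuming 8-bit grayscale images and an arbitrary threshold of 250, measures how much of a frame is saturated. It illustrates the problem only and is not the technique used in the v2 tracking software.

```python
from typing import List


def saturated_fraction(image: List[List[int]], threshold: int = 250) -> float:
    """Return the fraction of pixels whose value is at or above `threshold`.

    A large fraction suggests a strong infrared source or reflector is washing
    out part of the image, where hands become hard to separate from background.
    """
    pixels = [p for row in image for p in row]
    if not pixels:
        return 0.0
    saturated = sum(1 for p in pixels if p >= threshold)
    return saturated / len(pixels)
```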

Software

Once the image data is streamed to your computer, it’s time for some heavy mathematical lifting. Contrary to a popular misconception, the Leap Motion Controller doesn’t generate a depth map; instead, it applies advanced algorithms to the raw sensor data.

The Leap Motion Service is the software on your computer that processes the images. After compensating for background objects (such as heads) and ambient environmental lighting, the images are analyzed to reconstruct a 3D representation of what the device sees.
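The article doesn't describe how background objects and ambient lighting are compensated for. A generic stand-in is background subtraction: compare each pixel with a reference image of the static scene and keep only pixels that are noticeably brighter. The sketch assumes 8-bit grayscale images as nested lists of equal size and an arbitrary threshold; the function name is hypothetical, and this is not Leap Motion's algorithm.

```python
from typing import List

Image = List[List[int]]


def subtract_background(frame: Image, background: Image, threshold: int = 30) -> Image:
    """Return a binary mask: 1 where `frame` is brighter than the `background`
    reference by more than `threshold`, 0 elsewhere.

    Static objects and steady ambient light appear in both images and cancel
    out, leaving mostly what has entered the scene, such as a hand.
    """
    mask: Image = []
    for frame_row, bg_row in zip(frame, background):
        mask.append([1 if f - b > threshold else 0
                     for f, b in zip(frame_row, bg_row)])
    return mask
```

In practice the reference image would itself need updating as lighting drifts; a fixed reference keeps the sketch short.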

Next, the tracking layer matches the data to extract tracking information such as fingers and tools. Our tracking algorithms interpret the 3D data and infer the positions of occluded objects. Filtering techniques are applied to ensure smooth temporal coherence of the data. The Leap Motion Service then feeds the results – expressed as a series of frames, or snapshots, containing all of the tracking data – into a transport protocol.
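The filtering step can be illustrated with one of the simplest temporal filters, an exponential moving average, applied to a tracked 3D position from one frame to the next. This is a swapped-in example: the article does not say which filters the Leap Motion Service uses, and the class name `ExponentialSmoother` and its `alpha` parameter are hypothetical.

```python
from typing import Optional, Tuple

Vec3 = Tuple[float, float, float]


class ExponentialSmoother:
    """Exponential moving average over successive 3D positions.

    alpha must be in (0, 1]: higher values follow new samples more quickly,
    lower values smooth out more jitter at the cost of added lag.
    """

    def __init__(self, alpha: float = 0.5) -> None:
        if not 0.0 < alpha <= 1.0:
            raise ValueError("alpha must be in (0, 1]")
        self.alpha = alpha
        self._state: Optional[Vec3] = None

    def update(self, position: Vec3) -> Vec3:
        """Feed one new sample and return the smoothed position."""
        if self._state is None:
            self._state = position  # the first sample passes through unchanged
        else:
            a = self.alpha
            self._state = tuple(a * p + (1 - a) * s
                                for p, s in zip(position, self._state))
        return self._state
```

With `alpha=0.5`, a fingertip that jumps from x = 0 to x = 10 mm is reported at 5 mm, then 7.5 mm, converging on the new position over a few frames instead of snapping to it.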

Over this protocol, the service communicates with the Leap Motion Control Panel, as well as with native and web client libraries, via a local socket connection (TCP for native, WebSocket for web). The client library organizes the data into an object-oriented API structure, manages frame history, and provides helper functions and classes.
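As a rough sketch of what a client library does with incoming frames, the code below turns JSON text messages into small objects and keeps a bounded frame history. The message fields (`id`, `timestamp`, `fingers`, `tipPosition`), the class names, and the 60-frame default are assumptions for illustration, not the actual Leap Motion wire format or SDK API; a real client would also manage the socket connection itself.

```python
import json
from collections import deque
from dataclasses import dataclass
from typing import Deque, List, Tuple


@dataclass
class Finger:
    id: int
    tip_position: Tuple[float, float, float]


@dataclass
class Frame:
    id: int
    timestamp: int
    fingers: List[Finger]


class FrameClient:
    """Hypothetical client-side wrapper: parses raw JSON frame messages into
    Frame objects and keeps a bounded history of the most recent frames."""

    def __init__(self, history_size: int = 60) -> None:
        self._history: Deque[Frame] = deque(maxlen=history_size)

    def on_message(self, message: str) -> Frame:
        """Parse one message received from the socket and record it."""
        data = json.loads(message)
        frame = Frame(
            id=data["id"],
            timestamp=data["timestamp"],
            fingers=[Finger(f["id"], tuple(f["tipPosition"]))
                     for f in data.get("fingers", [])],
        )
        self._history.appendleft(frame)  # newest frame sits at index 0
        return frame

    def frame(self, history: int = 0) -> Frame:
        """Return the current frame (history=0) or an earlier one (1, 2, ...).

        Raises IndexError if that frame is no longer (or not yet) stored.
        """
        return self._history[history]
```

Using `deque(maxlen=...)` means the oldest frame is dropped automatically once the history is full, so memory use stays constant however long the application runs.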

From there, the application logic ties into the Leap Motion input, allowing a motion-controlled interactive experience. Next week, we’ll take a closer look at our SDK and getting started with our API.
