How to convert from the color camera space to the depth camera space in Kinect For Windows

Posted by nsmoly on August 3, 2012

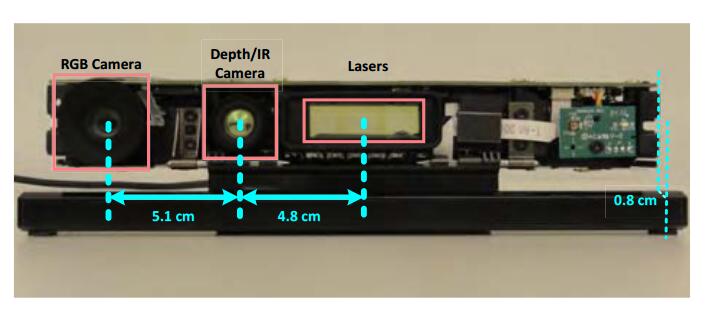

Kinect has two cameras, video (color) and depth (IR), and therefore there are two different coordinate systems in which you can compute things: the depth camera coordinate frame of reference (which the Kinect skeleton uses and returns its results in) and the color camera coordinate system. The Face Tracking API, which we shipped with the Kinect For Windows SDK 1.5 Developer Toolkit, computes its results in the color camera coordinate frame, since it relies heavily on RGB data. To use the 3D face tracking results together with the Kinect skeleton, you may want to convert them from the color camera space to the depth camera space. Both are right-handed coordinate systems with Z pointing out of the sensor (towards the user) and Y pointing up, but the two systems do not share the same origin and their axes are not collinear, due to the differences between the two cameras. Therefore you need to convert from one system to the other.
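The axis conventions above also explain a sign flip in the conversion code later in this post: camera-space Y points up, while image rows grow downward, so y is negated when a point is projected to pixels. Here is a minimal pinhole-camera sketch of that projection and its inverse. It assumes an ideal camera with no lens distortion and the principal point at the image centre (the same simplification the conversion code makes); `Vec3`, `Pixel` and `PinholeCamera` are hypothetical helpers, not Kinect SDK types.

```cpp
#include <cassert>

// Hypothetical helper types for illustration; not part of the Kinect SDK.
struct Vec3  { float x, y, z; };
struct Pixel { float u, v; };

// Ideal pinhole camera: no lens distortion, principal point at the image centre.
struct PinholeCamera
{
    float width;    // image width in pixels
    float height;   // image height in pixels
    float focalPx;  // focal length in pixels

    // Camera space (Y up, Z toward the user) -> image space (v grows downward).
    Pixel Project(const Vec3& p) const
    {
        assert(p.z > 0.0f && "point must be in front of the camera");
        return { width * 0.5f + (p.x / p.z) * focalPx,
                 height * 0.5f - (p.y / p.z) * focalPx };
    }

    // Inverse of Project() when the depth z of the point is already known.
    Vec3 Unproject(const Pixel& px, float z) const
    {
        return { ((px.u - width * 0.5f) / focalPx) * z,
                 ((height * 0.5f - px.v) / focalPx) * z,
                 z };
    }
};
```

For example, with a 320x240 depth image and a focal length of 285.63 pixels (the value I understand `NUI_CAMERA_DEPTH_NOMINAL_FOCAL_LENGTH_IN_PIXELS` to have; check your SDK headers), a point on the optical axis projects to the image centre (160, 120), and a point above the axis lands in the upper half of the image (smaller v).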

Unfortunately, the Kinect API does not yet provide a way to convert between the color and depth camera spaces. The proper way to do it is to calibrate the two cameras and use their extrinsic parameters for the conversion, but the Kinect API neither exposes those parameters nor provides any function that performs the conversion. So I came up with the code below, which approximately converts a point from the color camera space to the depth camera space. It only approximates the “real” conversion, so keep that in mind when using it. The code is provided “as is” with no warranties; use it at your own risk.
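For comparison, if the extrinsic parameters were available, the conversion would be a single rigid transform, `pDepth = R * pColor + t`. The sketch below is a minimal illustration under that assumption: `Extrinsics`, `ColorToDepth` and `DepthToColor` are hypothetical names, not part of the Kinect SDK, and R and t would have to come from your own stereo calibration of the two cameras.

```cpp
#include <array>

struct Vec3 { float x, y, z; };

// Hypothetical calibration result: a rigid transform taking color-camera
// coordinates to depth-camera coordinates, pDepth = R * pColor + t.
struct Extrinsics
{
    std::array<std::array<float, 3>, 3> R;  // rotation matrix, row-major
    Vec3 t;                                 // translation in metres
};

// Color camera space -> depth camera space.
Vec3 ColorToDepth(const Extrinsics& e, const Vec3& p)
{
    return { e.R[0][0] * p.x + e.R[0][1] * p.y + e.R[0][2] * p.z + e.t.x,
             e.R[1][0] * p.x + e.R[1][1] * p.y + e.R[1][2] * p.z + e.t.y,
             e.R[2][0] * p.x + e.R[2][1] * p.y + e.R[2][2] * p.z + e.t.z };
}

// Depth camera space -> color camera space: pColor = R^T * (pDepth - t).
Vec3 DepthToColor(const Extrinsics& e, const Vec3& p)
{
    const Vec3 d{ p.x - e.t.x, p.y - e.t.y, p.z - e.t.z };
    return { e.R[0][0] * d.x + e.R[1][0] * d.y + e.R[2][0] * d.z,
             e.R[0][1] * d.x + e.R[1][1] * d.y + e.R[2][1] * d.z,
             e.R[0][2] * d.x + e.R[1][2] * d.y + e.R[2][2] * d.z };
}
```

Because R is a rotation (orthonormal), its inverse is its transpose, which is what `DepthToColor` uses instead of a general matrix inverse.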


```cpp
#include <windows.h>
#include <cassert>
#include <NuiApi.h>        // Kinect for Windows SDK 1.x
#include <DirectXMath.h>   // XMFLOAT3, XMVECTOR (older samples used <xnamath.h>, which has no namespace)
using namespace DirectX;

/*
  This function demonstrates a simplified (and approximate) way of converting from the
  color camera space to the depth camera space. It takes a 3D point in the color camera
  space and returns its coordinates in the depth camera space.

  The algorithm is as follows:
  1) Take a point in the depth camera space that is near the resulting converted 3D point.
     As a "good enough" approximation, we take the coordinates of the original color
     camera space point.
  2) Project the depth camera space point to (u,v) depth image space.
  3) Convert the depth image (u,v) coordinates to (u',v') color image coordinates with
     the Kinect API.
  4) Unproject the converted (u',v') color image point to the 3D color camera space
     (using the known Z from the depth space).
  5) Find the translation vector between the two spaces as
     translation = colorCameraSpacePoint - depthCameraSpacePoint.
  6) Translate the original color camera space 3D point by the inverse of the computed
     translation vector.

  This algorithm is only a rough approximation and assumes that the transformation
  between the camera spaces is roughly the same in a small neighbourhood of a given point.
*/
HRESULT ConvertFromColorCameraSpaceToDepthCameraSpace(const XMFLOAT3* pPointInColorCameraSpace,
                                                      XMFLOAT3* pPointInDepthCameraSpace)
{
    if (pPointInColorCameraSpace == nullptr || pPointInDepthCameraSpace == nullptr)
    {
        return E_POINTER;
    }

    // The projection below divides by Z, so the point must be in front of the camera
    if (pPointInColorCameraSpace->z <= 0.0f)
    {
        return E_INVALIDARG;
    }

    // Camera settings - these should be changed according to the camera mode.
    // The nominal focal lengths correspond to a 320x240 depth image and a 640x480 color image.
    const float depthImageWidth = 320.0f;
    const float depthImageHeight = 240.0f;
    const float depthCameraFocalLengthInPixels = NUI_CAMERA_DEPTH_NOMINAL_FOCAL_LENGTH_IN_PIXELS;
    const float colorImageWidth = 640.0f;
    const float colorImageHeight = 480.0f;
    const float colorCameraFocalLengthInPixels = NUI_CAMERA_COLOR_NOMINAL_FOCAL_LENGTH_IN_PIXELS;

    // Take a point in the depth camera space near the expected resulting point. Here we use the
    // passed color camera space 3D point. We convert it from the depth camera space back to the
    // color camera space to find the shift vector between the spaces. Then we apply the reverse
    // of this vector to go from the color camera space to the depth camera space.
    const XMFLOAT3 depthCameraSpace3DPoint = *pPointInColorCameraSpace;

    // Project the depth camera space 3D point to the depth image (pinhole model with the
    // principal point at the image centre; camera Y points up, image Y grows downward)
    XMFLOAT2 depthImage2DPoint;
    depthImage2DPoint.x = depthImageWidth * 0.5f + (depthCameraSpace3DPoint.x / depthCameraSpace3DPoint.z) * depthCameraFocalLengthInPixels;
    depthImage2DPoint.y = depthImageHeight * 0.5f - (depthCameraSpace3DPoint.y / depthCameraSpace3DPoint.z) * depthCameraFocalLengthInPixels;

    // Transform from the depth image space to the color image space.
    // The depth value is passed in millimetres, packed above the player-index bits.
    POINT colorImage2DPoint;
    NUI_IMAGE_VIEW_AREA viewArea = { NUI_IMAGE_DIGITAL_ZOOM_1X, 0, 0 };
    const USHORT packedDepth = USHORT(USHORT(depthCameraSpace3DPoint.z * 1000.0f) << NUI_IMAGE_PLAYER_INDEX_SHIFT);
    HRESULT hr = NuiImageGetColorPixelCoordinatesFromDepthPixel(
        NUI_IMAGE_RESOLUTION_640x480, &viewArea,
        LONG(depthImage2DPoint.x + 0.5f), LONG(depthImage2DPoint.y + 0.5f),
        packedDepth,
        &colorImage2DPoint.x, &colorImage2DPoint.y);
    if (FAILED(hr))
    {
        assert(false && "NuiImageGetColorPixelCoordinatesFromDepthPixel failed");
        return hr;
    }

    // Unproject the color image point into the color camera space, reusing Z from the depth space
    XMFLOAT3 colorCameraSpace3DPoint;
    colorCameraSpace3DPoint.z = depthCameraSpace3DPoint.z;
    colorCameraSpace3DPoint.x = ((float(colorImage2DPoint.x) - colorImageWidth * 0.5f) / colorCameraFocalLengthInPixels) * colorCameraSpace3DPoint.z;
    colorCameraSpace3DPoint.y = ((-float(colorImage2DPoint.y) + colorImageHeight * 0.5f) / colorCameraFocalLengthInPixels) * colorCameraSpace3DPoint.z;

    // Compute the translation from the depth to the color camera space:
    // colorPoint ~= depthPoint + translation
    const XMVECTOR vTranslationFromDepthToColorCameraSpace =
        XMLoadFloat3(&colorCameraSpace3DPoint) - XMLoadFloat3(&depthCameraSpace3DPoint);

    // Transform the original color camera 3D point to the depth camera space by applying
    // the inverse of the computed shift vector
    const XMVECTOR v3DPointInKinectSkeletonSpace =
        XMLoadFloat3(pPointInColorCameraSpace) - vTranslationFromDepthToColorCameraSpace;
    XMStoreFloat3(pPointInDepthCameraSpace, v3DPointInKinectSkeletonSpace);
    return S_OK;
}
```
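To check the idea behind steps 1 to 6 without a sensor, here is a self-contained simulation. It assumes an idealized rig in which the color camera frame differs from the depth frame only by a hypothetical translation `kTrueOffset` (2.5 cm along X, no rotation), and it replaces `NuiImageGetColorPixelCoordinatesFromDepthPixel` with a hypothetical `MockDepthPixelToColorPixel` that maps a depth pixel to a color pixel through that rig without integer rounding. The focal lengths 285.63 and 531.15 are the values I understand the SDK's nominal depth and color constants to have; verify them against your headers.

```cpp
#include <cassert>

struct Vec3  { float x, y, z; };
struct Pixel { float u, v; };

// Ideal pinhole camera: principal point at the image centre, no distortion.
struct PinholeCamera
{
    float width, height, focalPx;

    Pixel Project(const Vec3& p) const
    {
        assert(p.z > 0.0f && "point must be in front of the camera");
        return { width * 0.5f + (p.x / p.z) * focalPx,
                 height * 0.5f - (p.y / p.z) * focalPx };
    }

    Vec3 Unproject(const Pixel& px, float z) const
    {
        return { ((px.u - width * 0.5f) / focalPx) * z,
                 ((height * 0.5f - px.v) / focalPx) * z,
                 z };
    }
};

// Assumed nominal intrinsics: 320x240 depth image, 640x480 color image.
const PinholeCamera kDepthCam{ 320.0f, 240.0f, 285.63f };
const PinholeCamera kColorCam{ 640.0f, 480.0f, 531.15f };

// Hypothetical simulated rig: pColor = pDepth + kTrueOffset (no rotation).
const Vec3 kTrueOffset{ 0.025f, 0.0f, 0.0f };

// Hypothetical stand-in for NuiImageGetColorPixelCoordinatesFromDepthPixel
// (works in float pixels, unlike the real API, which returns integers).
Pixel MockDepthPixelToColorPixel(const Pixel& depthPx, float depthZ)
{
    const Vec3 p = kDepthCam.Unproject(depthPx, depthZ);
    return kColorCam.Project({ p.x + kTrueOffset.x, p.y + kTrueOffset.y, p.z + kTrueOffset.z });
}

// Steps 1-6 of the function above, written against the simulated rig.
Vec3 ApproxColorToDepth(const Vec3& pColor)
{
    const Vec3 q = pColor;                                               // 1) nearby depth-space guess
    const Pixel depthPx = kDepthCam.Project(q);                          // 2) project into the depth image
    const Pixel colorPx = MockDepthPixelToColorPixel(depthPx, q.z);      // 3) depth pixel -> color pixel
    const Vec3 qColor = kColorCam.Unproject(colorPx, q.z);               // 4) unproject, reusing Z
    const Vec3 shift{ qColor.x - q.x, qColor.y - q.y, qColor.z - q.z };  // 5) local translation
    return { pColor.x - shift.x, pColor.y - shift.y, pColor.z - shift.z }; // 6) apply its inverse
}
```

In this idealized rig (a pure translation with no Z component and no pixel rounding), `ApproxColorToDepth({0.1f, 0.2f, 2.0f})` recovers the depth-space point (0.075, 0.2, 2.0) up to floating-point error. The real API returns integer color pixel coordinates, and one color pixel at 2 m corresponds to roughly 2 m / 531.15 ≈ 3.8 mm, so expect errors of at least that order, plus whatever rotation between the cameras a single translation cannot capture.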
