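[2012-10-10] The multithreading used in this article performs poorly. Please use the kernel-event approach based on WaitForMultipleObjects from the Microsoft samples instead.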
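As a rough sketch of that advice: one loop waits on the color, depth, and skeleton events together with `WaitForMultipleObjects` (with `bWaitAll` set to `FALSE`) and dispatches on the return value. The helper below only decodes that return value. The name `signaledIndex` is made up for illustration, and the `WAIT_OBJECT_0` value is copied into a local constant so the snippet builds without `<windows.h>`. The commented loop is an outline under those assumptions, not the Microsoft sample's code.

```cpp
#include <cstdint>

// Local copy of the documented Win32 value WAIT_OBJECT_0, renamed so it
// cannot clash with <windows.h>. Present only so the sketch is portable.
const std::uint32_t kWaitObject0 = 0x00000000u;

// Decodes the result of WaitForMultipleObjects(count, handles, FALSE, ms).
// Returns the index of the signaled handle, or -1 for any other result
// (WAIT_TIMEOUT = 0x102, WAIT_FAILED = 0xFFFFFFFF, abandoned mutexes).
int signaledIndex(std::uint32_t waitResult, std::uint32_t count)
{
    std::uint32_t offset = waitResult - kWaitObject0;
    return offset < count ? static_cast<int>(offset) : -1;
}

// Outline of the single loop (Windows only, not compiled here).
// h1/h3/h5 are the events and h2/h4 the stream handles from the listing:
//
//   HANDLE events[3] = { h1, h3, h5 };            // color, depth, skeleton
//   for (;;) {
//       DWORD r = WaitForMultipleObjects(3, events, FALSE, 100);
//       switch (signaledIndex(r, 3)) {
//           case 0: drawColor(h2);  break;
//           case 1: drawDepth(h4);  break;
//           case 2: drawSkeleton(); break;
//           default: break;                       // timeout or failure
//       }
//   }
```

Because everything runs in one loop, the frame buffers are never read and written by two threads at once, which is the main weakness of the three-thread version in this post.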

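Over the past two days I have been reading the code of the new SDK 1.5. Since I need to combine it with face recognition, I had to study its face tracking code. After several setbacks and a fair amount of guesswork, I finally got it working. For how to use it and for the finer details, you will need to read Microsoft's own articles; Microsoft's site on the topic is the place to start.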

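The code is below.

Environment: VS2010 + OpenCV 2.3.1 + Kinect SDK 1.5

Install the drivers and so on yourself; if you lack the basics, learn them first. The code builds on my earlier SDK 1.5 update, so if something is unclear, read the previous posts first.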

    // win32_KinectFaceTracking.cpp : Defines the entry point for the console application.
    //

    #include "stdafx.h"
    //----------------------------------------------------
    #define _WINDOWS
    #include <FaceTrackLib.h>

    HRESULT VisualizeFaceModel(IFTImage* pColorImg, IFTModel* pModel, FT_CAMERA_CONFIG const* pCameraConfig, FLOAT const* pSUCoef,
                               FLOAT zoomFactor, POINT viewOffset, IFTResult* pAAMRlt, UINT32 color);
    //----------------------------------------------------
    #include <vector>
    #include <deque>
    #include <iomanip>
    #include <stdexcept>
    #include <string>
    #include <iostream>
    #include <malloc.h>   // _malloca, _freea
    #include <math.h>     // log
    #include "opencv2\opencv.hpp"

    using namespace std;
    using namespace cv;

    #include <windows.h>
    #include <mmsystem.h>
    #include <assert.h>
    #include <strsafe.h>
    #include "NuiApi.h"

    #define COLOR_WIDTH     640
    #define COLOR_HIGHT     480
    #define DEPTH_WIDTH     320
    #define DEPTH_HIGHT     240
    #define SKELETON_WIDTH  640
    #define SKELETON_HIGHT  480
    #define CHANNEL         3

    BYTE buf[DEPTH_WIDTH*DEPTH_HIGHT*CHANNEL];

    int drawColor(HANDLE h);
    int drawDepth(HANDLE h);
    int drawSkeleton();

    //---face tracking------------------------------------------
    BYTE *colorBuffer,*depthBuffer;
    IFTImage* pColorFrame;
    IFTImage* pDepthFrame;
    FT_VECTOR3D m_hint3D[2];   // [0] = shoulder center, [1] = head
    //-----------------------------------------------------------------------------------
    HANDLE h1;   // color frame-ready event
    HANDLE h3;   // depth frame-ready event
    HANDLE h5;   // skeleton frame-ready event
    HANDLE h2;   // color stream handle
    HANDLE h4;   // depth stream handle

    DWORD WINAPI VideoFunc(LPVOID pParam)
    {
        // cout<<"video start!"<<endl;
        while(TRUE)
        {
            if(WaitForSingleObject(h1,INFINITE)==WAIT_OBJECT_0)
            {
                drawColor(h2);
            }
            // Sleep(10);
            // cout<<"video"<<endl;
        }
    }

    DWORD WINAPI DepthFunc(LPVOID pParam)
    {
        // cout<<"depth start!"<<endl;
        while(TRUE)
        {
            if(WaitForSingleObject(h3,INFINITE)==WAIT_OBJECT_0)
            {
                drawDepth(h4);
            }
            // Sleep(10);
            // cout<<"depth"<<endl;
        }
    }

    DWORD WINAPI SkeletonFunc(LPVOID pParam)
    {
        // HANDLE h = (HANDLE)pParam;
        // cout<<"skeleton start!"<<endl;
        while(TRUE)
        {
            // Wait on the skeleton event (h5); the original waited on the depth event (h3).
            if(WaitForSingleObject(h5,INFINITE)==WAIT_OBJECT_0)
                drawSkeleton();
            // Sleep(10);
            // cout<<"skeleton"<<endl;
        }
    }

    // Unused placeholder thread function.
    DWORD WINAPI TrackFace(LPVOID pParam)
    {
        cout<<"track face start!"<<endl;
        while(TRUE)
        {
            // do something
            Sleep(16);
            cout<<"track face"<<endl;
        }
    }
    //-----------------------------------------------------------------------------------

    // Fetch the next color frame, copy it into pColorFrame for the face tracker, and display it.
    int drawColor(HANDLE h)
    {
        const NUI_IMAGE_FRAME * pImageFrame = NULL;
        HRESULT hr = NuiImageStreamGetNextFrame( h, 0, &pImageFrame );
        if( FAILED( hr ) )
        {
            cout<<"Get Color Image Frame Failed"<<endl;
            return -1;
        }
        INuiFrameTexture * pTexture = pImageFrame->pFrameTexture;
        NUI_LOCKED_RECT LockedRect;
        pTexture->LockRect( 0, &LockedRect, NULL, 0 );
        if( LockedRect.Pitch != 0 )
        {
            BYTE * pBuffer = (BYTE*) LockedRect.pBits;
            colorBuffer = pBuffer;
            memcpy(pColorFrame->GetBuffer(), PBYTE(LockedRect.pBits), min(pColorFrame->GetBufferSize(), UINT(pTexture->BufferLen())));

            Mat temp(COLOR_HIGHT,COLOR_WIDTH,CV_8UC4,pBuffer);
            imshow("b",temp);
            waitKey(1);
        }
        NuiImageStreamReleaseFrame( h, pImageFrame );
        return 0;
    }

    // Fetch the next depth frame, copy it into pDepthFrame for the face tracker,
    // and display it colored by player index.
    int drawDepth(HANDLE h)
    {
        const NUI_IMAGE_FRAME * pImageFrame = NULL;
        HRESULT hr = NuiImageStreamGetNextFrame( h, 0, &pImageFrame );
        if( FAILED( hr ) )
        {
            cout<<"Get Depth Image Frame Failed"<<endl;
            return -1;
        }
        INuiFrameTexture * pTexture = pImageFrame->pFrameTexture;
        NUI_LOCKED_RECT LockedRect;
        pTexture->LockRect( 0, &LockedRect, NULL, 0 );
        if( LockedRect.Pitch != 0 )
        {
            USHORT * pBuff = (USHORT*) LockedRect.pBits;
            // depthBuffer = pBuff;
            memcpy(pDepthFrame->GetBuffer(), PBYTE(LockedRect.pBits), min(pDepthFrame->GetBufferSize(), UINT(pTexture->BufferLen())));

            for(int i=0;i<DEPTH_WIDTH*DEPTH_HIGHT;i++)
            {
                BYTE index = pBuff[i]&0x07;                  // low 3 bits: player index
                USHORT realDepth = (pBuff[i]&0xFFF8)>>3;     // high 13 bits: depth
                BYTE scale = 255 - (BYTE)(256*realDepth/0x0fff);
                buf[CHANNEL*i] = buf[CHANNEL*i+1] = buf[CHANNEL*i+2] = 0;
                switch( index )
                {
                case 0:
                    buf[CHANNEL*i]=scale/2;
                    buf[CHANNEL*i+1]=scale/2;
                    buf[CHANNEL*i+2]=scale/2;
                    break;
                case 1:
                    buf[CHANNEL*i]=scale;
                    break;
                case 2:
                    buf[CHANNEL*i+1]=scale;
                    break;
                case 3:
                    buf[CHANNEL*i+2]=scale;
                    break;
                case 4:
                    buf[CHANNEL*i]=scale;
                    buf[CHANNEL*i+1]=scale;
                    break;
                case 5:
                    buf[CHANNEL*i]=scale;
                    buf[CHANNEL*i+2]=scale;
                    break;
                case 6:
                    buf[CHANNEL*i+1]=scale;
                    buf[CHANNEL*i+2]=scale;
                    break;
                case 7:
                    buf[CHANNEL*i]=255-scale/2;
                    buf[CHANNEL*i+1]=255-scale/2;
                    buf[CHANNEL*i+2]=255-scale/2;
                    break;
                }
            }
            Mat b(DEPTH_HIGHT,DEPTH_WIDTH,CV_8UC3,buf);
            imshow("depth",b);
            waitKey(1);
        }
        NuiImageStreamReleaseFrame( h, pImageFrame );
        return 0;
    }

    // Fetch the next skeleton frame, draw the tracked skeletons, and update the
    // head/shoulder hints (m_hint3D) used by the face tracker.
    int drawSkeleton()
    {
        NUI_SKELETON_FRAME SkeletonFrame;
        cv::Point pt[20];
        Mat skeletonMat=Mat(SKELETON_HIGHT,SKELETON_WIDTH,CV_8UC3,Scalar(0,0,0));
        HRESULT hr = NuiSkeletonGetNextFrame( 0, &SkeletonFrame );
        if( FAILED( hr ) )
        {
            cout<<"Get Skeleton Image Frame Failed"<<endl;
            return -1;
        }
        bool bFoundSkeleton = false;
        for( int i = 0 ; i < NUI_SKELETON_COUNT ; i++ )
        {
            if( SkeletonFrame.SkeletonData[i].eTrackingState == NUI_SKELETON_TRACKED )
            {
                bFoundSkeleton = true;
            }
        }
        // Has skeletons!
        if( bFoundSkeleton )
        {
            NuiTransformSmooth(&SkeletonFrame,NULL);
            for( int i = 0 ; i < NUI_SKELETON_COUNT ; i++ )
            {
                if( SkeletonFrame.SkeletonData[i].eTrackingState == NUI_SKELETON_TRACKED )
                {
                    for (int j = 0; j < NUI_SKELETON_POSITION_COUNT; j++)
                    {
                        float fx,fy;
                        NuiTransformSkeletonToDepthImage( SkeletonFrame.SkeletonData[i].SkeletonPositions[j], &fx, &fy );
                        pt[j].x = (int) ( fx * SKELETON_WIDTH )/320;
                        pt[j].y = (int) ( fy * SKELETON_HIGHT )/240;
                        circle(skeletonMat,pt[j],5,CV_RGB(255,0,0));
                    }

                    cv::line(skeletonMat,pt[NUI_SKELETON_POSITION_HEAD],pt[NUI_SKELETON_POSITION_SHOULDER_CENTER],CV_RGB(0,255,0));
                    cv::line(skeletonMat,pt[NUI_SKELETON_POSITION_SHOULDER_CENTER],pt[NUI_SKELETON_POSITION_SPINE],CV_RGB(0,255,0));
                    cv::line(skeletonMat,pt[NUI_SKELETON_POSITION_SPINE],pt[NUI_SKELETON_POSITION_HIP_CENTER],CV_RGB(0,255,0));

                    cv::line(skeletonMat,pt[NUI_SKELETON_POSITION_HAND_RIGHT],pt[NUI_SKELETON_POSITION_WRIST_RIGHT],CV_RGB(0,255,0));
                    cv::line(skeletonMat,pt[NUI_SKELETON_POSITION_WRIST_RIGHT],pt[NUI_SKELETON_POSITION_ELBOW_RIGHT],CV_RGB(0,255,0));
                    cv::line(skeletonMat,pt[NUI_SKELETON_POSITION_ELBOW_RIGHT],pt[NUI_SKELETON_POSITION_SHOULDER_RIGHT],CV_RGB(0,255,0));
                    cv::line(skeletonMat,pt[NUI_SKELETON_POSITION_SHOULDER_RIGHT],pt[NUI_SKELETON_POSITION_SHOULDER_CENTER],CV_RGB(0,255,0));

                    cv::line(skeletonMat,pt[NUI_SKELETON_POSITION_SHOULDER_CENTER],pt[NUI_SKELETON_POSITION_SHOULDER_LEFT],CV_RGB(0,255,0));
                    cv::line(skeletonMat,pt[NUI_SKELETON_POSITION_SHOULDER_LEFT],pt[NUI_SKELETON_POSITION_ELBOW_LEFT],CV_RGB(0,255,0));
                    cv::line(skeletonMat,pt[NUI_SKELETON_POSITION_ELBOW_LEFT],pt[NUI_SKELETON_POSITION_WRIST_LEFT],CV_RGB(0,255,0));
                    cv::line(skeletonMat,pt[NUI_SKELETON_POSITION_WRIST_LEFT],pt[NUI_SKELETON_POSITION_HAND_LEFT],CV_RGB(0,255,0));

                    cv::line(skeletonMat,pt[NUI_SKELETON_POSITION_HIP_CENTER],pt[NUI_SKELETON_POSITION_HIP_RIGHT],CV_RGB(0,255,0));
                    cv::line(skeletonMat,pt[NUI_SKELETON_POSITION_HIP_RIGHT],pt[NUI_SKELETON_POSITION_KNEE_RIGHT],CV_RGB(0,255,0));
                    cv::line(skeletonMat,pt[NUI_SKELETON_POSITION_KNEE_RIGHT],pt[NUI_SKELETON_POSITION_ANKLE_RIGHT],CV_RGB(0,255,0));
                    cv::line(skeletonMat,pt[NUI_SKELETON_POSITION_ANKLE_RIGHT],pt[NUI_SKELETON_POSITION_FOOT_RIGHT],CV_RGB(0,255,0));

                    cv::line(skeletonMat,pt[NUI_SKELETON_POSITION_HIP_CENTER],pt[NUI_SKELETON_POSITION_HIP_LEFT],CV_RGB(0,255,0));
                    cv::line(skeletonMat,pt[NUI_SKELETON_POSITION_HIP_LEFT],pt[NUI_SKELETON_POSITION_KNEE_LEFT],CV_RGB(0,255,0));
                    cv::line(skeletonMat,pt[NUI_SKELETON_POSITION_KNEE_LEFT],pt[NUI_SKELETON_POSITION_ANKLE_LEFT],CV_RGB(0,255,0));
                    cv::line(skeletonMat,pt[NUI_SKELETON_POSITION_ANKLE_LEFT],pt[NUI_SKELETON_POSITION_FOOT_LEFT],CV_RGB(0,255,0));

                    // Head and shoulder-center positions serve as hints for the face tracker.
                    m_hint3D[0].x=SkeletonFrame.SkeletonData[i].SkeletonPositions[NUI_SKELETON_POSITION_SHOULDER_CENTER].x;
                    m_hint3D[0].y=SkeletonFrame.SkeletonData[i].SkeletonPositions[NUI_SKELETON_POSITION_SHOULDER_CENTER].y;
                    m_hint3D[0].z=SkeletonFrame.SkeletonData[i].SkeletonPositions[NUI_SKELETON_POSITION_SHOULDER_CENTER].z;
                    m_hint3D[1].x=SkeletonFrame.SkeletonData[i].SkeletonPositions[NUI_SKELETON_POSITION_HEAD].x;
                    m_hint3D[1].y=SkeletonFrame.SkeletonData[i].SkeletonPositions[NUI_SKELETON_POSITION_HEAD].y;
                    m_hint3D[1].z=SkeletonFrame.SkeletonData[i].SkeletonPositions[NUI_SKELETON_POSITION_HEAD].z;
                }
            }
        }
        imshow("skeleton",skeletonMat);
        waitKey(1);
        return 0;
    }

    int main(int argc,char * argv[])
    {
        // Initialize NUI
        HRESULT hr = NuiInitialize(NUI_INITIALIZE_FLAG_USES_DEPTH_AND_PLAYER_INDEX|NUI_INITIALIZE_FLAG_USES_COLOR|NUI_INITIALIZE_FLAG_USES_SKELETON);
        if( hr != S_OK )
        {
            cout<<"NuiInitialize failed"<<endl;
            return hr;
        }

        // Open the Kinect color image stream
        h1 = CreateEvent( NULL, TRUE, FALSE, NULL );
        h2 = NULL;
        hr = NuiImageStreamOpen(NUI_IMAGE_TYPE_COLOR,NUI_IMAGE_RESOLUTION_640x480, 0, 2, h1, &h2);
        if( FAILED( hr ) )
        {
            cout<<"Could not open image stream video"<<endl;
            return hr;
        }

        // Open the depth + player index stream
        h3 = CreateEvent( NULL, TRUE, FALSE, NULL );
        h4 = NULL;
        hr = NuiImageStreamOpen( NUI_IMAGE_TYPE_DEPTH_AND_PLAYER_INDEX, NUI_IMAGE_RESOLUTION_320x240, 0, 2, h3, &h4);
        if( FAILED( hr ) )
        {
            cout<<"Could not open depth stream video"<<endl;
            return hr;
        }

        // Enable skeleton tracking
        h5 = CreateEvent( NULL, TRUE, FALSE, NULL );
        hr = NuiSkeletonTrackingEnable( h5, 0 );
        if( FAILED( hr ) )
        {
            cout<<"Could not open skeleton stream video"<<endl;
            return hr;
        }

        HANDLE hThread1,hThread2,hThread3;
        hThread1 = CreateThread(NULL,0,VideoFunc,h2,0,NULL);
        hThread2 = CreateThread(NULL,0,DepthFunc,h4,0,NULL);
        hThread3 = CreateThread(NULL,0,SkeletonFunc,NULL,0,NULL);

        m_hint3D[0] = FT_VECTOR3D(0, 0, 0);
        m_hint3D[1] = FT_VECTOR3D(0, 0, 0);

        pColorFrame = FTCreateImage();
        pDepthFrame = FTCreateImage();

        IFTFaceTracker* pFT = FTCreateFaceTracker();
        if(!pFT)
        {
            return -1; // Handle errors
        }

        FT_CAMERA_CONFIG myCameraConfig = {640, 480, NUI_CAMERA_COLOR_NOMINAL_FOCAL_LENGTH_IN_PIXELS}; // width, height, focal length
        FT_CAMERA_CONFIG depthConfig;
        depthConfig.FocalLength = NUI_CAMERA_DEPTH_NOMINAL_FOCAL_LENGTH_IN_PIXELS;
        depthConfig.Width = 320;
        depthConfig.Height = 240; // These apparently must be filled in, and with the correct values!

        hr = pFT->Initialize(&myCameraConfig, &depthConfig, NULL, NULL);
        if( FAILED(hr) )
        {
            return -2; // Handle errors
        }

        // Create IFTResult to hold a face tracking result
        IFTResult* pFTResult = NULL;
        hr = pFT->CreateFTResult(&pFTResult);
        if(FAILED(hr))
        {
            return -11;
        }

        // prepare Image and SensorData for 640x480 RGB images
        if(!pColorFrame)
        {
            return -12; // Handle errors
        }

        // Attach assumes that the camera code provided by the application
        // is filling the buffer cameraFrameBuffer
        // pColorFrame->Attach(640, 480, colorBuffer, FTIMAGEFORMAT_UINT8_B8G8R8X8, 640*3);
        hr = pColorFrame->Allocate(640, 480, FTIMAGEFORMAT_UINT8_B8G8R8X8);
        if (FAILED(hr))
        {
            return hr;
        }
        hr = pDepthFrame->Allocate(320, 240, FTIMAGEFORMAT_UINT16_D13P3);
        if (FAILED(hr))
        {
            return hr;
        }

        FT_SENSOR_DATA sensorData;
        sensorData.pVideoFrame = pColorFrame;
        sensorData.pDepthFrame = pDepthFrame;
        sensorData.ZoomFactor = 1.0f;
        POINT point;point.x=0;point.y=0;
        sensorData.ViewOffset = point;

        bool isTracked = false;
        int iFaceTrackTimeCount=0;

        // Track a face
        while ( true )
        {
            // Call your camera method to process IO and fill the camera buffer
            // cameraObj.ProcessIO(cameraFrameBuffer); // replace with your method
            if(!isTracked)
            {
                hr = pFT->StartTracking(&sensorData, NULL, m_hint3D, pFTResult);
                if(SUCCEEDED(hr) && SUCCEEDED(pFTResult->GetStatus()))
                {
                    isTracked = true;
                }
                else
                {
                    // Handle errors
                    isTracked = false;
                }
            }
            else
            {
                // Continue tracking. It uses a previously known face position,
                // so it is an inexpensive call.
                hr = pFT->ContinueTracking(&sensorData, m_hint3D, pFTResult);
                if(FAILED(hr) || FAILED (pFTResult->GetStatus()))
                {
                    // Handle errors
                    isTracked = false;
                }
            }

            if(isTracked)
            {
                printf("Face is being tracked!\n");
                IFTModel* ftModel = NULL;
                hr = pFT->GetFaceModel(&ftModel);
                if(SUCCEEDED(hr))
                {
                    FLOAT* pSU = NULL;
                    UINT numSU;
                    BOOL suConverged;
                    pFT->GetShapeUnits(NULL, &pSU, &numSU, &suConverged);
                    POINT viewOffset = {0, 0};
                    hr = VisualizeFaceModel(pColorFrame, ftModel, &myCameraConfig, pSU, 1.0, viewOffset, pFTResult, 0x00FFFF00);
                    ftModel->Release(); // release the model obtained from GetFaceModel
                }
                if(FAILED(hr))
                    printf("Visualization failed!\n");

                Mat tempMat(COLOR_HIGHT,COLOR_WIDTH,CV_8UC4,pColorFrame->GetBuffer());
                imshow("faceTracking",tempMat);
                waitKey(1);
            }
            //printf("%d\n",pFTResult->GetStatus());
            // Do something with pFTResult.
            Sleep(16);
            iFaceTrackTimeCount++;
            if(iFaceTrackTimeCount>16*1000)
                break;
            // Terminate on some criteria.
        }

        // Clean up.
        pFTResult->Release();
        pColorFrame->Release();
        pDepthFrame->Release();
        pFT->Release();

        CloseHandle(hThread1);
        CloseHandle(hThread2);
        CloseHandle(hThread3);
        Sleep(60000);
        NuiShutdown();
        return 0;
    }

    // Draw the tracked face mesh (wireframe) and the face rectangle onto pColorImg.
    HRESULT VisualizeFaceModel(IFTImage* pColorImg, IFTModel* pModel, FT_CAMERA_CONFIG const* pCameraConfig, FLOAT const* pSUCoef,
                               FLOAT zoomFactor, POINT viewOffset, IFTResult* pAAMRlt, UINT32 color)
    {
        if (!pColorImg || !pModel || !pCameraConfig || !pSUCoef || !pAAMRlt)
        {
            return E_POINTER;
        }

        HRESULT hr = S_OK;
        UINT vertexCount = pModel->GetVertexCount();
        FT_VECTOR2D* pPts2D = reinterpret_cast<FT_VECTOR2D*>(_malloca(sizeof(FT_VECTOR2D) * vertexCount));
        if (pPts2D)
        {
            FLOAT *pAUs;
            UINT auCount;
            hr = pAAMRlt->GetAUCoefficients(&pAUs, &auCount);
            if (SUCCEEDED(hr))
            {
                FLOAT scale, rotationXYZ[3], translationXYZ[3];
                hr = pAAMRlt->Get3DPose(&scale, rotationXYZ, translationXYZ);
                if (SUCCEEDED(hr))
                {
                    hr = pModel->GetProjectedShape(pCameraConfig, zoomFactor, viewOffset, pSUCoef, pModel->GetSUCount(), pAUs, auCount,
                                                   scale, rotationXYZ, translationXYZ, pPts2D, vertexCount);
                    if (SUCCEEDED(hr))
                    {
                        POINT* p3DMdl = reinterpret_cast<POINT*>(_malloca(sizeof(POINT) * vertexCount));
                        if (p3DMdl)
                        {
                            for (UINT i = 0; i < vertexCount; ++i)
                            {
                                p3DMdl[i].x = LONG(pPts2D[i].x + 0.5f);
                                p3DMdl[i].y = LONG(pPts2D[i].y + 0.5f);
                            }

                            FT_TRIANGLE* pTriangles;
                            UINT triangleCount;
                            hr = pModel->GetTriangles(&pTriangles, &triangleCount);
                            if (SUCCEEDED(hr))
                            {
                                // Open-addressing hash set of edges, so each shared edge is drawn only once.
                                struct EdgeHashTable
                                {
                                    UINT32* pEdges;
                                    UINT edgesAlloc;

                                    void Insert(int a, int b)
                                    {
                                        UINT32 v = (min(a, b) << 16) | max(a, b);
                                        UINT32 index = (v + (v << 8)) * 49157, i;
                                        for (i = 0; i < edgesAlloc - 1 && pEdges[(index + i) & (edgesAlloc - 1)] && v != pEdges[(index + i) & (edgesAlloc - 1)]; ++i)
                                        {
                                        }
                                        pEdges[(index + i) & (edgesAlloc - 1)] = v;
                                    }
                                } eht;

                                eht.edgesAlloc = 1 << UINT(log(2.f * (1 + vertexCount + triangleCount)) / log(2.f));
                                eht.pEdges = reinterpret_cast<UINT32*>(_malloca(sizeof(UINT32) * eht.edgesAlloc));
                                if (eht.pEdges)
                                {
                                    ZeroMemory(eht.pEdges, sizeof(UINT32) * eht.edgesAlloc);
                                    for (UINT i = 0; i < triangleCount; ++i)
                                    {
                                        eht.Insert(pTriangles[i].i, pTriangles[i].j);
                                        eht.Insert(pTriangles[i].j, pTriangles[i].k);
                                        eht.Insert(pTriangles[i].k, pTriangles[i].i);
                                    }
                                    for (UINT i = 0; i < eht.edgesAlloc; ++i)
                                    {
                                        if(eht.pEdges[i] != 0)
                                        {
                                            pColorImg->DrawLine(p3DMdl[eht.pEdges[i] >> 16], p3DMdl[eht.pEdges[i] & 0xFFFF], color, 1);
                                        }
                                    }
                                    _freea(eht.pEdges);
                                }

                                // Render the face rect in magenta
                                RECT rectFace;
                                hr = pAAMRlt->GetFaceRect(&rectFace);
                                if (SUCCEEDED(hr))
                                {
                                    POINT leftTop = {rectFace.left, rectFace.top};
                                    POINT rightTop = {rectFace.right - 1, rectFace.top};
                                    POINT leftBottom = {rectFace.left, rectFace.bottom - 1};
                                    POINT rightBottom = {rectFace.right - 1, rectFace.bottom - 1};
                                    UINT32 nColor = 0xff00ff;
                                    SUCCEEDED(hr = pColorImg->DrawLine(leftTop, rightTop, nColor, 1)) &&
                                        SUCCEEDED(hr = pColorImg->DrawLine(rightTop, rightBottom, nColor, 1)) &&
                                        SUCCEEDED(hr = pColorImg->DrawLine(rightBottom, leftBottom, nColor, 1)) &&
                                        SUCCEEDED(hr = pColorImg->DrawLine(leftBottom, leftTop, nColor, 1));
                                }
                            }

                            _freea(p3DMdl);
                        }
                        else
                        {
                            hr = E_OUTOFMEMORY;
                        }
                    }
                }
            }
            _freea(pPts2D);
        }
        else
        {
            hr = E_OUTOFMEMORY;
        }
        return hr;
    }
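The depth loop in `drawDepth` relies on the packed 16-bit pixel layout of the depth-and-player-index stream: the low 3 bits hold the player index and the upper 13 bits hold the depth. The sketch below isolates that unpacking in portable C++. The names `DepthPixel`, `unpackDepth`, and `depthToGray` are invented for illustration, and the clamp in `depthToGray` is a deliberate variation: the listing casts straight to `BYTE`, so depths above 0x0fff wrap around instead of saturating.

```cpp
#include <cstdint>

// One unpacked pixel of the depth + player index stream (illustrative type).
struct DepthPixel {
    std::uint8_t  playerIndex;  // 0 = no player, nonzero = player slot
    std::uint16_t depth;        // 13-bit depth value
};

// Splits a raw 16-bit pixel exactly as drawDepth does:
// index = raw & 0x07, depth = (raw & 0xFFF8) >> 3.
DepthPixel unpackDepth(std::uint16_t raw)
{
    DepthPixel p;
    p.playerIndex = static_cast<std::uint8_t>(raw & 0x07);
    p.depth       = static_cast<std::uint16_t>(raw >> 3);
    return p;
}

// Maps depth to brightness, nearer = brighter, using the listing's formula
// 255 - 256*depth/0x0fff, but clamped so far pixels stay dark instead of wrapping.
std::uint8_t depthToGray(std::uint16_t depth)
{
    int level = 256 * static_cast<int>(depth) / 0x0fff;
    if (level > 255) level = 255;   // assumption: saturate rather than wrap
    return static_cast<std::uint8_t>(255 - level);
}
```

For example, the raw value `0x1F43` unpacks to player index 3 and depth 1000. The same layout is why the face tracker's depth image is allocated as `FTIMAGEFORMAT_UINT16_D13P3`.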

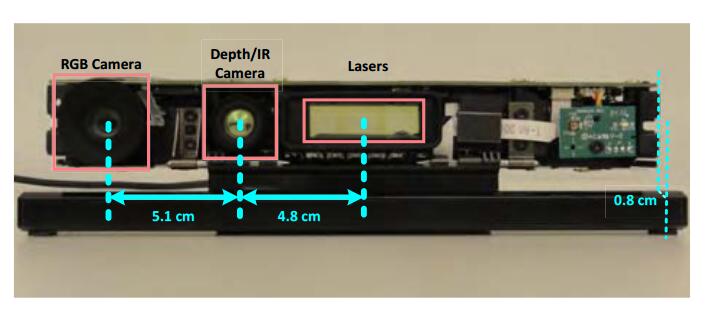

最后给个图片

[Project download](http://download.csdn.net/detail/guoming0000/4333334)

李逍遥说说
