这次给大家分享下我自己写的KinectV2的OpenCV项目,可以用于研究KinectV2图像算法的人。项目地址在我的Github页面。另外还有更复杂的OpenCV显示KinectV2人脸追踪的项目,适用于研究人脸追踪Face Tracking、人脸识别Face Recognition、人脸特征点提取Face Alignment,就不提供源码了,有需要请去我的淘宝店询问后购买。

相对于我以前给出的OpenCV程序,这个程序的好处是不用配置OpenCV环境,直接可以编译运行。不了解如何做到这点的同学,可以下载学习下这种配置方法。欢迎,留言或分享你的代码。

程序适用人群

- 学术研究、图像处理算法研究者

程序功能:

- 使用OpenCV显示KinectV2数据

开发环境:

- win32控制台应用程序 x86程序 (程序类型)

- VisualStudio 2013 (开发工具)

- KinectSDK-v2.0-PublicPreview1409-Setup (Kinect SDK驱动版本, http://brightguo.com/kinect2)

- Windows 8.1 (操作系统)

- OpenCV2.4.10

这里列出最重要的两个文件,完整项目请去Github上查看:

Kinect.h

|

1 2 3 4 5 6 7 8 9 10 11 12 13 14 15 16 17 18 19 20 21 22 23 24 25 26 27 28 29 30 31 32 33 34 35 36 37 38 39 40 41 42 43 44 45 46 47 |

#pragma once #include <Kinect.h>// Kinect Header files #include "include_opencv/opencv2/opencv.hpp" using namespace cv; // Safe release for interfaces template<class Interface> inline void SafeRelease(Interface *& pInterfaceToRelease) { if (pInterfaceToRelease != NULL) { pInterfaceToRelease->Release(); pInterfaceToRelease = NULL; } } //定义Kinect方法类 class CKinect { public: static const int cDepthWidth = 512; static const int cDepthHeight = 424; static const int cColorWidth = 1920; static const int cColorHeight = 1080; CKinect(); ~CKinect(); HRESULT InitKinect();//初始化Kinect void Update();//更新数据 void ProcessFrame(INT64 nTime, const UINT16* pDepthBuffer, int nDepthHeight, int nDepthWidth, USHORT nMinDepth, USHORT nMaxDepth, const RGBQUAD* pColorBuffer, int nColorWidth, int nColorHeight, int nBodyCount, IBody** ppBodies, const BYTE* pBodyIndexBuffer, int nBodyIndexWidth, int nBodyIndexHeight); private: IKinectSensor* m_pKinectSensor;// Current Kinect // Frame reader IMultiSourceFrameReader*m_pMultiSourceFrameReader; ICoordinateMapper* m_pCoordinateMapper; DepthSpacePoint* m_pDepthCoordinates; RGBQUAD* m_pOutputRGBX; RGBQUAD* m_pColorRGBX; RGBQUAD* m_pBackgroundRGBX; RGBQUAD* m_pDepthRGBX; Mat m_Depth; Mat m_Color; Mat m_BodyIndex; }; |

Kinect.cpp

|

1 2 3 4 5 6 7 8 9 10 11 12 13 14 15 16 17 18 19 20 21 22 23 24 25 26 27 28 29 30 31 32 33 34 35 36 37 38 39 40 41 42 43 44 45 46 47 48 49 50 51 52 53 54 55 56 57 58 59 60 61 62 63 64 65 66 67 68 69 70 71 72 73 74 75 76 77 78 79 80 81 82 83 84 85 86 87 88 89 90 91 92 93 94 95 96 97 98 99 100 101 102 103 104 105 106 107 108 109 110 111 112 113 114 115 116 117 118 119 120 121 122 123 124 125 126 127 128 129 130 131 132 133 134 135 136 137 138 139 140 141 142 143 144 145 146 147 148 149 150 151 152 153 154 155 156 157 158 159 160 161 162 163 164 165 166 167 168 169 170 171 172 173 174 175 176 177 178 179 180 181 182 183 184 185 186 187 188 189 190 191 192 193 194 195 196 197 198 199 200 201 202 203 204 205 206 207 208 209 210 211 212 213 214 215 216 217 218 219 220 221 222 223 224 225 226 227 228 229 230 231 232 233 234 235 236 237 238 239 240 241 242 243 244 245 246 247 248 249 250 251 252 253 254 255 256 257 258 259 260 261 262 263 264 265 266 267 268 269 270 271 272 273 274 275 276 277 278 279 280 281 282 283 284 285 286 287 288 289 290 291 292 293 294 295 296 297 298 299 300 301 302 303 304 305 306 307 308 309 310 311 312 313 314 315 316 317 318 319 320 321 322 323 324 325 326 327 328 329 330 331 332 333 334 335 336 337 338 339 340 341 342 343 344 345 346 347 348 349 350 351 352 353 354 355 356 357 358 359 360 361 362 363 364 365 366 367 368 369 370 371 372 373 374 375 376 377 378 379 380 381 382 383 384 385 386 387 388 389 390 391 392 393 394 395 396 397 398 399 |

#include "stdafx.h" #include "Kinect.h" #include "Head.h" #include <math.h> #include <limits> #include <strsafe.h> static const DWORD c_AppRunTime = 5 * 60;//程序运行时间(s),设置5*60表示运行5分钟后程序自动关闭 static const float c_JointThickness = 3.0f; static const float c_TrackedBoneThickness = 6.0f; static const float c_InferredBoneThickness = 1.0f; static const float c_HandSize = 30.0f; CKinect::CKinect() { m_pKinectSensor = NULL; m_pCoordinateMapper = NULL; m_pMultiSourceFrameReader = NULL; m_pDepthCoordinates = NULL; m_pOutputRGBX = NULL; m_pBackgroundRGBX = NULL; m_pColorRGBX = NULL; // create heap storage for composite image pixel data in RGBX format m_pOutputRGBX = new RGBQUAD[cColorWidth * cColorHeight]; // create heap storage for background image pixel data in RGBX format m_pBackgroundRGBX = new RGBQUAD[cColorWidth * cColorHeight]; // create heap storage for color pixel data in RGBX format m_pColorRGBX = new RGBQUAD[cColorWidth * cColorHeight]; // create heap storage for the coorinate mapping from color to depth m_pDepthCoordinates = new DepthSpacePoint[cColorWidth * cColorHeight]; m_pDepthRGBX = new RGBQUAD[cDepthWidth * cDepthHeight];// create heap storage for color pixel data in RGBX format //初始化OpenCV数组 m_Depth.create(cDepthHeight, cDepthWidth, CV_16UC1); m_Color.create(cColorHeight, cColorWidth, CV_8UC4); m_BodyIndex.create(cDepthHeight, cDepthWidth, CV_8UC1); } CKinect::~CKinect() { SAFE_RELEASE_VEC(m_pOutputRGBX); SAFE_RELEASE_VEC(m_pBackgroundRGBX); SAFE_RELEASE_VEC(m_pColorRGBX); SAFE_RELEASE_VEC(m_pDepthCoordinates); SAFE_RELEASE_VEC(m_pDepthRGBX); // done with frame reader SafeRelease(m_pMultiSourceFrameReader); // done with coordinate mapper SafeRelease(m_pCoordinateMapper); // close the Kinect Sensor if (m_pKinectSensor) { m_pKinectSensor->Close(); } SafeRelease(m_pKinectSensor); } HRESULT CKinect::InitKinect() { HRESULT hr; hr = GetDefaultKinectSensor(&m_pKinectSensor); if (FAILED(hr)) { return hr; } if (m_pKinectSensor) { // Initialize the Kinect and get coordinate mapper and the frame reader if (SUCCEEDED(hr)) { hr = m_pKinectSensor->get_CoordinateMapper(&m_pCoordinateMapper); } hr = m_pKinectSensor->Open(); if (SUCCEEDED(hr)) { hr = m_pKinectSensor->OpenMultiSourceFrameReader( FrameSourceTypes::FrameSourceTypes_Depth | FrameSourceTypes::FrameSourceTypes_Color | FrameSourceTypes::FrameSourceTypes_Body | FrameSourceTypes::FrameSourceTypes_BodyIndex, &m_pMultiSourceFrameReader); // hr = m_pKinectSensor->OpenMultiSourceFrameReader(FrameSourceTypes::FrameSourceTypes_Color , &m_pMultiSourceFrameReader); } } if (!m_pKinectSensor || FAILED(hr)) { return E_FAIL; } return hr; } void CKinect::Update() { if (!m_pMultiSourceFrameReader) { return; } IMultiSourceFrame* pMultiSourceFrame = NULL; IDepthFrame* pDepthFrame = NULL; IColorFrame* pColorFrame = NULL; IBodyIndexFrame* pBodyIndexFrame = NULL; IBodyFrame *pBodyFrame = NULL; HRESULT hr = m_pMultiSourceFrameReader->AcquireLatestFrame(&pMultiSourceFrame); if (SUCCEEDED(hr))//深度信息 { IDepthFrameReference* pDepthFrameReference = NULL; hr = pMultiSourceFrame->get_DepthFrameReference(&pDepthFrameReference); if (SUCCEEDED(hr)) { hr = pDepthFrameReference->AcquireFrame(&pDepthFrame); } SafeRelease(pDepthFrameReference); } if (SUCCEEDED(hr))//彩色信息 { IColorFrameReference* pColorFrameReference = NULL; hr = pMultiSourceFrame->get_ColorFrameReference(&pColorFrameReference); if (SUCCEEDED(hr)) { hr = pColorFrameReference->AcquireFrame(&pColorFrame); } SafeRelease(pColorFrameReference); } if (SUCCEEDED(hr))//骨骼信息 { IBodyFrameReference* pBodyFrameReference = NULL; hr = pMultiSourceFrame->get_BodyFrameReference(&pBodyFrameReference); if (SUCCEEDED(hr)) { hr = pBodyFrameReference->AcquireFrame(&pBodyFrame); } SafeRelease(pBodyFrameReference); } if (SUCCEEDED(hr))//人体掩膜部分 { IBodyIndexFrameReference* pBodyIndexFrameReference = NULL; hr = pMultiSourceFrame->get_BodyIndexFrameReference(&pBodyIndexFrameReference); if (SUCCEEDED(hr)) { hr = pBodyIndexFrameReference->AcquireFrame(&pBodyIndexFrame); } SafeRelease(pBodyIndexFrameReference); } if (SUCCEEDED(hr)) { INT64 nDepthTime = 0; IFrameDescription* pDepthFrameDescription = NULL; int nDepthWidth = 0; int nDepthHeight = 0; UINT nDepthBufferSize = 0; UINT16 *pDepthBuffer = NULL; USHORT nDepthMinReliableDistance = 0; USHORT nDepthMaxDistance = 0; IFrameDescription* pColorFrameDescription = NULL; int nColorWidth = 0; int nColorHeight = 0; ColorImageFormat imageFormat = ColorImageFormat_None; UINT nColorBufferSize = 0; RGBQUAD *pColorBuffer = NULL; IBody* ppBodies[BODY_COUNT] = { 0 }; IFrameDescription* pBodyIndexFrameDescription = NULL; int nBodyIndexWidth = 0; int nBodyIndexHeight = 0; UINT nBodyIndexBufferSize = 0; BYTE *pBodyIndexBuffer = NULL; // get depth frame data hr = pDepthFrame->get_RelativeTime(&nDepthTime); if (SUCCEEDED(hr)) { hr = pDepthFrame->get_FrameDescription(&pDepthFrameDescription); } if (SUCCEEDED(hr)) { hr = pDepthFrameDescription->get_Width(&nDepthWidth); } if (SUCCEEDED(hr)) { hr = pDepthFrameDescription->get_Height(&nDepthHeight); } if (SUCCEEDED(hr)) { hr = pDepthFrame->get_DepthMinReliableDistance(&nDepthMinReliableDistance); } if (SUCCEEDED(hr)) { nDepthMaxDistance = USHRT_MAX; } if (SUCCEEDED(hr)) { hr = pDepthFrame->AccessUnderlyingBuffer(&nDepthBufferSize, &pDepthBuffer); } m_Depth = Mat(nDepthHeight, nDepthWidth, CV_16UC1, pDepthBuffer).clone();/////////////// // get color frame data if (SUCCEEDED(hr)) { hr = pColorFrame->get_FrameDescription(&pColorFrameDescription); } if (SUCCEEDED(hr)) { hr = pColorFrameDescription->get_Width(&nColorWidth); } if (SUCCEEDED(hr)) { hr = pColorFrameDescription->get_Height(&nColorHeight); } if (SUCCEEDED(hr)) { hr = pColorFrame->get_RawColorImageFormat(&imageFormat); } if (SUCCEEDED(hr)) { if (imageFormat == ColorImageFormat_Bgra) { hr = pColorFrame->AccessRawUnderlyingBuffer(&nColorBufferSize, reinterpret_cast<BYTE**>(&pColorBuffer)); } else if (m_pColorRGBX) { pColorBuffer = m_pColorRGBX; nColorBufferSize = cColorWidth * cColorHeight * sizeof(RGBQUAD); hr = pColorFrame->CopyConvertedFrameDataToArray(nColorBufferSize, reinterpret_cast<BYTE*>(pColorBuffer), ColorImageFormat_Bgra); } else { hr = E_FAIL; } } m_Color = Mat(nColorHeight, nColorWidth, CV_8UC4, pColorBuffer);/////////////// if (SUCCEEDED(hr)) { hr = pBodyFrame->GetAndRefreshBodyData(_countof(ppBodies), ppBodies); } // get body index frame data if (SUCCEEDED(hr)) { hr = pBodyIndexFrame->get_FrameDescription(&pBodyIndexFrameDescription); } if (SUCCEEDED(hr)) { hr = pBodyIndexFrameDescription->get_Width(&nBodyIndexWidth); } if (SUCCEEDED(hr)) { hr = pBodyIndexFrameDescription->get_Height(&nBodyIndexHeight); } if (SUCCEEDED(hr)) { hr = pBodyIndexFrame->AccessUnderlyingBuffer(&nBodyIndexBufferSize, &pBodyIndexBuffer); } m_BodyIndex = Mat(nBodyIndexHeight, nBodyIndexWidth, CV_8UC1, pBodyIndexBuffer); if (SUCCEEDED(hr)) { ProcessFrame(nDepthTime, pDepthBuffer, nDepthWidth, nDepthHeight, nDepthMinReliableDistance, nDepthMaxDistance, pColorBuffer, nColorWidth, nColorHeight, BODY_COUNT, ppBodies, pBodyIndexBuffer, nBodyIndexWidth, nBodyIndexHeight); } SafeRelease(pDepthFrameDescription); SafeRelease(pColorFrameDescription); SafeRelease(pBodyIndexFrameDescription); for (int i = 0; i < _countof(ppBodies); ++i) { SafeRelease(ppBodies[i]); } } SafeRelease(pDepthFrame); SafeRelease(pColorFrame); SafeRelease(pBodyFrame); SafeRelease(pBodyIndexFrame); SafeRelease(pMultiSourceFrame); } void CKinect::ProcessFrame(INT64 nTime, const UINT16* pDepthBuffer, int nDepthWidth, int nDepthHeight, USHORT nMinDepth, USHORT nMaxDepth, const RGBQUAD* pColorBuffer, int nColorWidth, int nColorHeight, int nBodyCount, IBody** ppBodies, const BYTE* pBodyIndexBuffer, int nBodyIndexWidth, int nBodyIndexHeight) { LARGE_INTEGER qpcNow = { 0 }; WCHAR szStatusMessage[64]; // Make sure we've received valid data if (m_pCoordinateMapper && m_pDepthCoordinates && m_pOutputRGBX && pDepthBuffer && (nDepthWidth == cDepthWidth) && (nDepthHeight == cDepthHeight) && pColorBuffer && (nColorWidth == cColorWidth) && (nColorHeight == cColorHeight) && pBodyIndexBuffer && (nBodyIndexWidth == cDepthWidth) && (nBodyIndexHeight == cDepthHeight) && m_pDepthRGBX) { HRESULT hr = m_pCoordinateMapper->MapColorFrameToDepthSpace(nDepthWidth * nDepthHeight, (UINT16*)pDepthBuffer, nColorWidth * nColorHeight, m_pDepthCoordinates); if (FAILED(hr)) { return; } // loop over output pixels for (int colorIndex = 0; colorIndex < (nColorWidth*nColorHeight); ++colorIndex) { // default setting source to copy from the background pixel const RGBQUAD* pSrc = m_pBackgroundRGBX + colorIndex; DepthSpacePoint p = m_pDepthCoordinates[colorIndex]; // Values that are negative infinity means it is an invalid color to depth mapping so we // skip processing for this pixel if (p.X != -std::numeric_limits<float>::infinity() && p.Y != -std::numeric_limits<float>::infinity()) { int depthX = static_cast<int>(p.X + 0.5f); int depthY = static_cast<int>(p.Y + 0.5f); if ((depthX >= 0 && depthX < nDepthWidth) && (depthY >= 0 && depthY < nDepthHeight)) { BYTE player = pBodyIndexBuffer[depthX + (depthY * cDepthWidth)]; // if we're tracking a player for the current pixel, draw from the color camera if (player != 0xff) { // set source for copy to the color pixel pSrc = m_pColorRGBX + colorIndex; } } } // write output m_pOutputRGBX[colorIndex] = *pSrc; } }//确保参数都准确 //imshow("color", m_Color); Mat showImage; resize(m_Color, showImage, Size(cColorWidth / 2, cColorHeight / 2)); imshow("Color", showImage);////imshow("ColorImage", ColorImage); imshow("Depth", m_Depth); imshow("BodyIndex", m_BodyIndex); RGBQUAD* pRGBX = m_pDepthRGBX; // end pixel is start + width*height - 1 const UINT16* pBufferEnd = pDepthBuffer + (nDepthWidth * nDepthHeight); while (pDepthBuffer < pBufferEnd) { USHORT depth = *pDepthBuffer; BYTE intensity = static_cast<BYTE>((depth >= nMinDepth) && (depth <= nMaxDepth) ? (depth % 256) : 0); pRGBX->rgbRed = intensity; pRGBX->rgbGreen = intensity; pRGBX->rgbBlue = intensity; ++pRGBX; ++pDepthBuffer; } // Draw the data nDepthHeight OpenCV Mat DepthImage(nDepthHeight, nDepthWidth, CV_8UC4, m_pDepthRGBX); Mat show = DepthImage.clone(); imshow("DepthImage", show); waitKey(1); } |

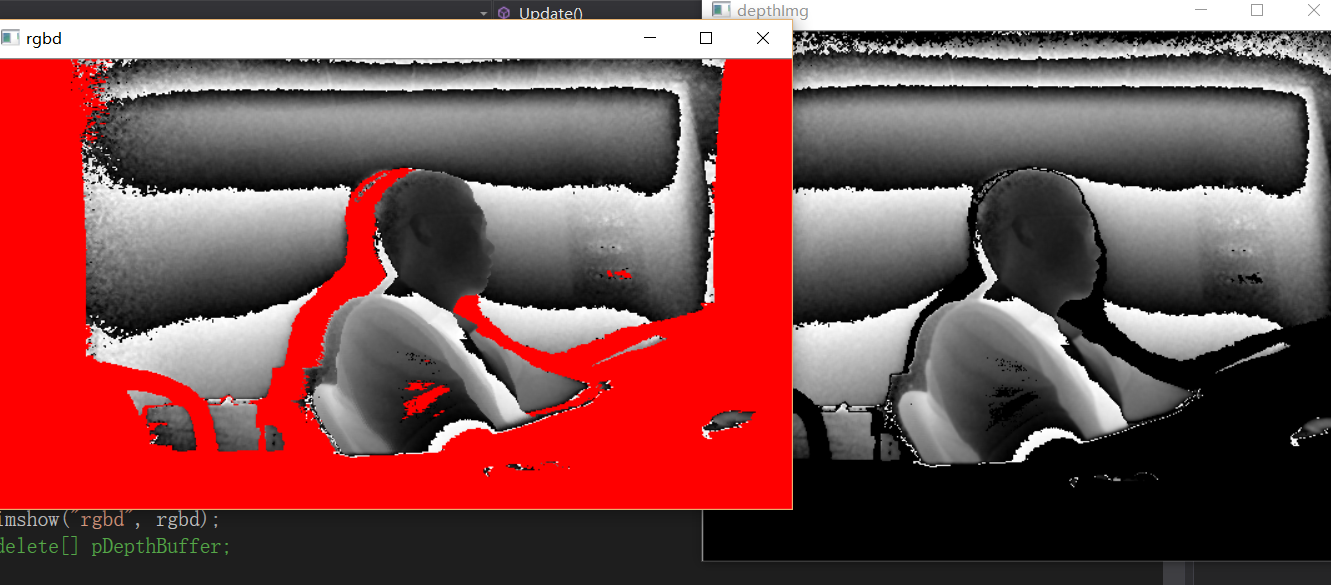

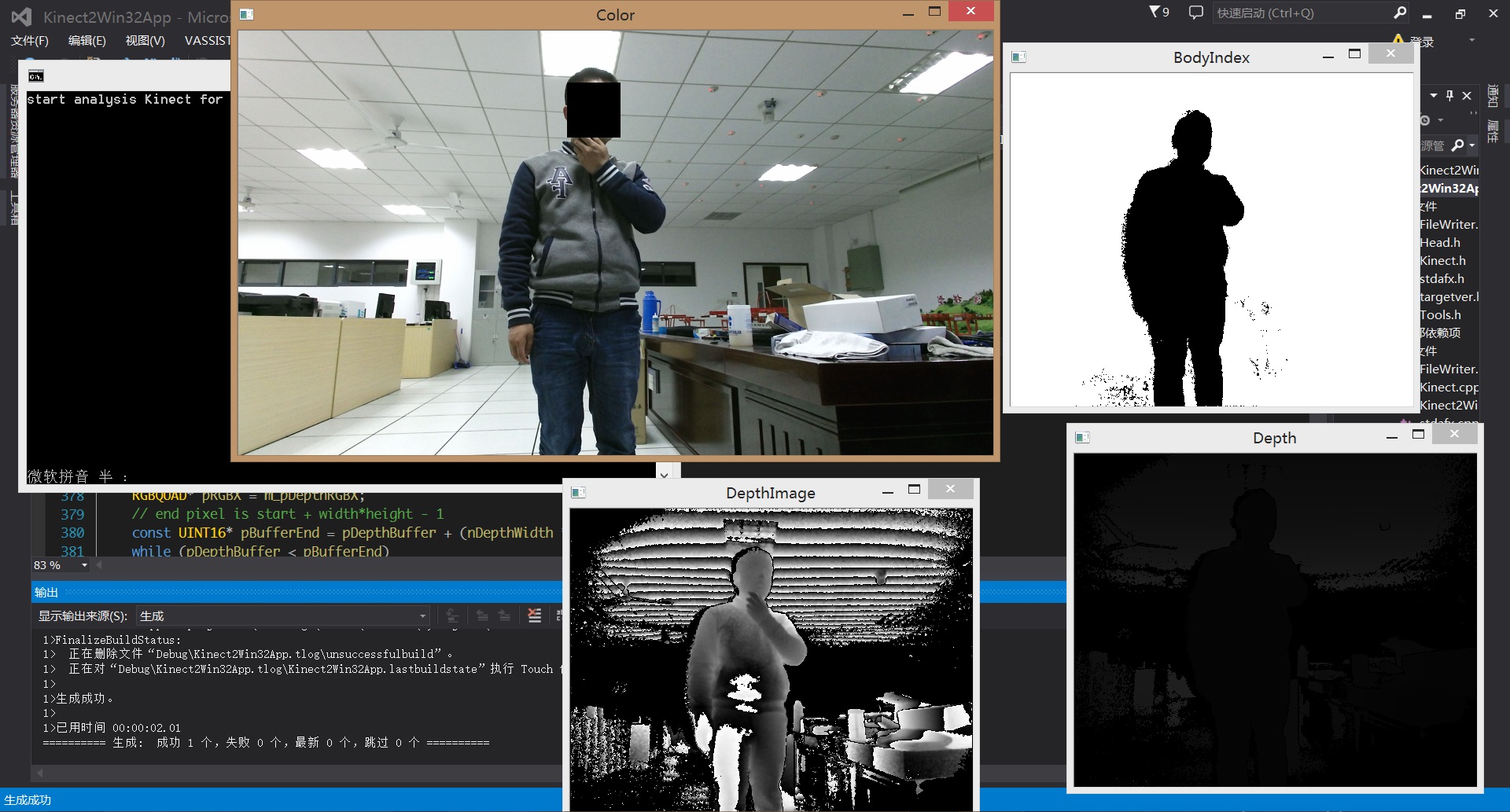

需要注意的是,Skeleton我并没有做显示处理,仅仅是存储了数据,有需要的人自己想办法显示喽。本程序最终结果图像:

以下图片是网友visitor538791785通过map color 到depth得到的图像(就是找到color图像中对应的深度数据,很显然由于摄像头视差color图像不可能都能找到对应的深度数据,对于无效值他用红色标识出来了。),相关代码可以看评论区找到:

李逍遥说说

李逍遥说说

![[转]利用Kinect把任意屏幕变得可触控-李逍遥说说](https://brightguo.com/wp-content/uploads/2017/04/o_wite-220x150.png)

![[转]基于kinect的体感遥控机械臂仿真-李逍遥说说](https://brightguo.com/wp-content/uploads/2017/03/QQ截图20170321225632-220x150.jpg)

![[转]解决KinectV2驱动问题-李逍遥说说](http://pterneas.com/wp-content/uploads/2017/02/kinect-drivers-update.png)